The PandaProbe dashboard gives you several ways to create evaluation runs without writing code. You can evaluate directly from the data you are already inspecting, or create broader filtered eval runs from the Evaluations tab. Use the dashboard when you want to:

- Evaluate selected traces or sessions during review

- Create a one-off eval run with filters and sampling

- Choose metrics visually

- Review run status, scores, reasons, and metadata from the dashboard

Create evals from Traces tab

Use the Traces tab when you already know which traces you want to evaluate.

Choose traces to evaluate

Select a batch of traces from the table and click Evaluate, or open a specific trace and click Evaluate from the trace detail view.

Configure the eval run

In the sidebar, enter a run name, select one or more trace-level metrics, and optionally choose the model used for LLM-as-judge evaluation.

Create evals from Sessions tab

Use the Sessions tab when you want to evaluate complete agent lifecycles. The workflow is the same as trace evaluation: select sessions from the table, or open a session detail page and click Evaluate.

Choose sessions to evaluate

Select a batch of sessions from the table and click Evaluate, or open a single session and click Evaluate from the session detail page.

Configure the eval run

In the sidebar, enter a run name and select session-level metrics such as agent_reliability or agent_consistency.

Optionally customize signal weights

For session evaluation, you can use Customize signal weights to adjust how much each trace-level signal contributes to the session score.

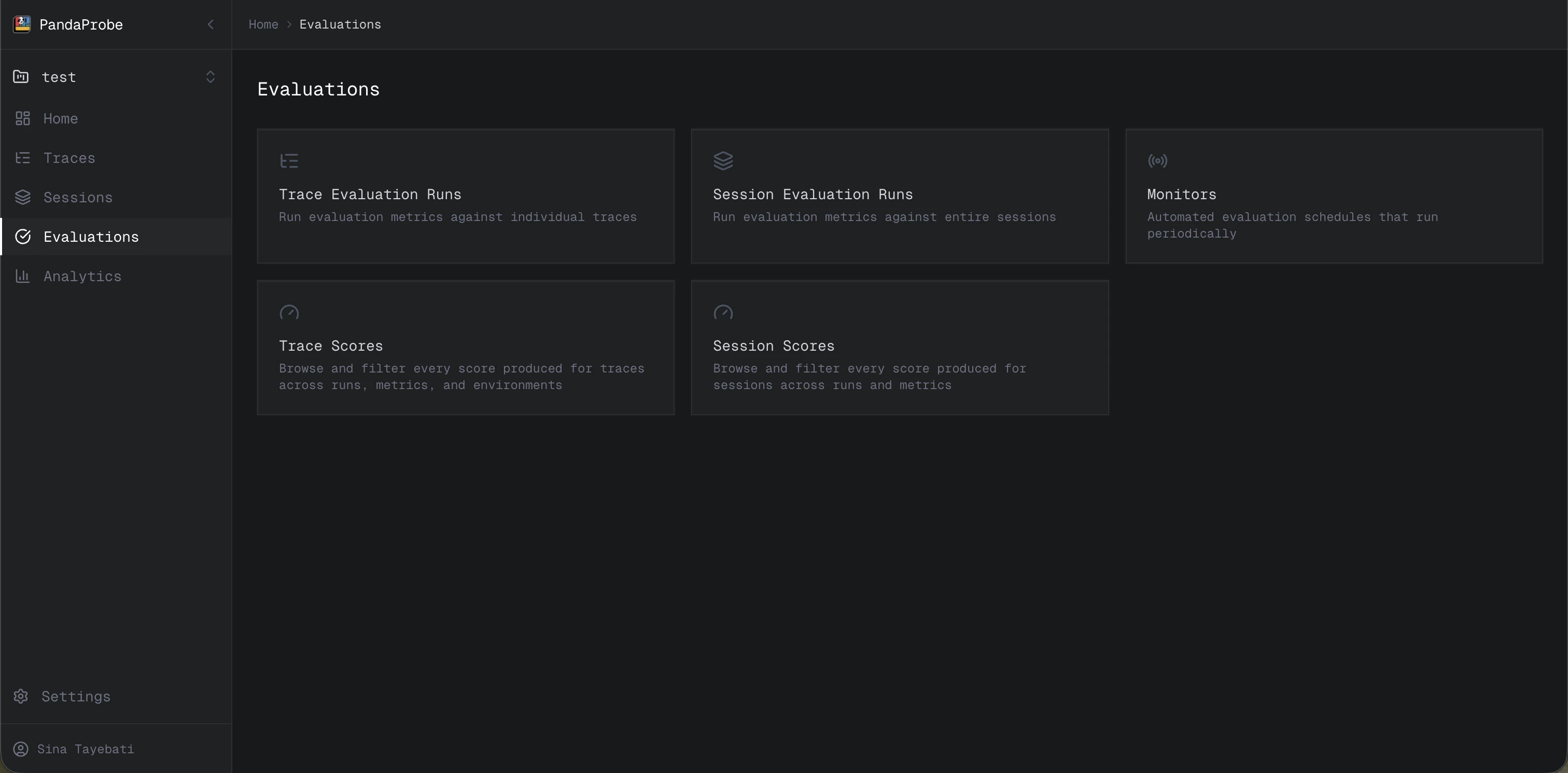
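To build intuition for what adjusting signal weights does, here is a minimal sketch that combines per-signal scores with a normalized weighted average. This page does not describe PandaProbe's actual aggregation formula, so the weighted average, the `session_score` helper, the default weight of 1.0, and the signal names are all assumptions made for illustration.

```python
from typing import Mapping


def session_score(signal_scores: Mapping[str, float],
                  weights: Mapping[str, float]) -> float:
    """Combine per-signal scores into one score with a normalized weighted average.

    Signals missing from `weights` default to a weight of 1.0 (an assumption).
    """
    used = {name: weights.get(name, 1.0) for name in signal_scores}
    if any(w < 0 for w in used.values()):
        raise ValueError("weights must be non-negative")
    total = sum(used.values())
    if total == 0:
        raise ValueError("at least one signal needs a positive weight")
    return sum(score * used[name] for name, score in signal_scores.items()) / total


# Hypothetical signal names and scores, for illustration only.
scores = {"signal_a": 0.9, "signal_b": 0.5}

equal = session_score(scores, {"signal_a": 1.0, "signal_b": 1.0})
favor_a = session_score(scores, {"signal_a": 3.0, "signal_b": 1.0})
print(round(equal, 3), round(favor_a, 3))
```

Under this model, raising one signal's weight pulls the session score toward that signal's value, which is the effect to keep in mind when changing weights in the sidebar.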

Create evals from Evaluations tab

Use the Evaluations tab when you want to create an eval run from filters rather than manually selecting traces or sessions. When you open Evaluations, you will see five cards:

- Trace evaluation runs

- Session evaluation runs

- Monitors

- Trace scores

- Session scores

Trace evaluation runs

Open Trace evaluation runs when you want to evaluate traces selected by filters.

Configure the run

Add a name, select trace-level metrics, and optionally select the model used for LLM-as-judge evaluation.

Add filters

Use filters such as Started after, Started before, Status, Trace ID, Session ID, and Tags to define the traces you want to evaluate.

Set the sampling rate

Set Sampling rate to choose what portion of matching traces should be evaluated. For example, 0.25 evaluates 25% of traces that match your filters.

Session evaluation runs

Open Session evaluation runs when you want to evaluate sessions selected by filters.

Configure the run

Add a name, select session-level metrics, and optionally customize signal weights.

Add filters

Use filters such as Started after, Started before, Session ID, User, Tags, and other session filters to define the sessions you want to evaluate.

Set the sampling rate

Set Sampling rate to choose what portion of matching sessions should be evaluated.
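The filter-then-sample flow used by both trace and session runs can be pictured with the sketch below. It is not PandaProbe code: the `Trace` record, the `matches` and `sample` helpers, the assumption that filters combine with AND, and the choice of independent per-item sampling are all hypothetical. The dashboard may select an exact fraction or sample differently.

```python
import random
from dataclasses import dataclass, field
from datetime import datetime
from typing import Iterable, List, Optional, Set


@dataclass
class Trace:
    # Hypothetical record shape, for illustration only.
    trace_id: str
    started_at: datetime
    status: str
    tags: Set[str] = field(default_factory=set)


def matches(trace: Trace,
            started_after: Optional[datetime] = None,
            started_before: Optional[datetime] = None,
            status: Optional[str] = None,
            tags: Optional[Iterable[str]] = None) -> bool:
    """Assumes filters combine with AND and that an unset filter matches everything."""
    if started_after is not None and trace.started_at < started_after:
        return False
    if started_before is not None and trace.started_at > started_before:
        return False
    if status is not None and trace.status != status:
        return False
    if tags and not set(tags) <= trace.tags:
        return False
    return True


def sample(items: List[Trace], sampling_rate: float,
           seed: Optional[int] = None) -> List[Trace]:
    """Keep each item independently with probability `sampling_rate`."""
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0 and 1")
    rng = random.Random(seed)
    return [item for item in items if rng.random() < sampling_rate]


# 1000 synthetic traces; every second one has status "ok".
traces = [
    Trace(f"t{i}", datetime(2024, 1, 1 + i % 28), "ok" if i % 2 == 0 else "error")
    for i in range(1000)
]
matching = [t for t in traces if matches(t, status="ok")]
selected = sample(matching, 0.25, seed=7)
print(len(matching), len(selected))
```

With a sampling rate of 0.25, roughly a quarter of the matching items end up in the run. A rate of 1.0 evaluates everything that matches the filters.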

Review eval results

After an eval run starts, PandaProbe processes it in the background. You can review progress and results from the Evaluations tab:

- Trace evaluation runs shows trace eval run status and history.

- Session evaluation runs shows session eval run status and history.

- Trace scores lets you inspect scores attached to traces.

- Session scores lets you inspect scores attached to sessions.

Next steps

Scheduling Evaluations

Automate recurring evaluations with monitors.

Run Evaluations via API

Create eval runs programmatically.